In this Talking tech column, Andy Coulson delves into the world of artificial intelligence to find out how, in the future, it might be able to take account of conscious language or even edit text.

For this issue of The Edit my column is going to be a little different from normal. Usually, I try to highlight how technology can help you with the theme of the issue. This issue’s theme, conscious language, proves to be a bit of a challenge on that front. What I am going to do instead is to get the crystal ball out and do a bit of speculating about how technology might develop to help ensure more conscious language use.

Natural language processing

Natural language processing (NLP) is the field of computer science concerned with developing computer systems that can understand text and speech in a way comparable to a human. It is a branch of artificial intelligence (AI), which I will discuss in more detail later, and it is what makes tools like Google Translate and the digital assistants Siri and Alexa work. Any tools that can improve how conscious the language in a text is (or, indeed, any machine competitors of ours!) will emerge from this field.

Just to simplify things (slightly), I am going to ignore speech and all the computational issues that speech recognition brings. Let us concentrate on text and look at how machines are taught to understand it and make decisions about how to respond to it. To date, much NLP development has focused on teaching a machine to respond to text, whereas we are interested in how a machine would understand and amend a text. Microsoft and Grammarly both use AI to help improve their editing tools, so you can be sure other tech companies are experimenting with this too.

While language is to a degree rule-based, it is also full of subtleties and ambiguities. The rules allow tools like PerfectIt to work – we can describe and recognise patterns and so teach a machine to do this too. This only takes us so far, though, as NLP then needs to pick the text apart to find the meaning within it. It must carry out a range of tasks on the text to enable the computer to ‘understand’ it. These include:

- Part-of-speech (grammatical) tagging, where the computer works out the role of each word. For example, it would identify ‘make’ being used as a verb (make a jacket) rather than a noun (the make of jacket).

- Recognising names (named entity recognition), so that it can identify proper nouns. It knows Lesley is likely to be someone’s name rather than a thing, so ‘picking Lesley up on the way’ can be interpreted in the right sense.

- Resolving co-references, where it links a pronoun to a previously named person or object, so it recognises that ‘she’ is ‘Kathy’ from a previous sentence. This kind of task can also involve dealing with metaphors or idioms – recognising that someone who is cold may not want an extra jumper but might not be much fun to talk to.

- Sentiment analysis, which is also known as opinion mining. Here the computer is attempting to recognise more hidden aspects of the text, such as whether the tone is positive or negative.
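To see why rules only take us so far, here is a deliberately naive Python sketch of the first task. It is hypothetical and far simpler than real part-of-speech taggers, which learn from large collections of annotated text: a single hand-written rule tags ‘make’ as a noun when it follows a determiner such as ‘the’, and as a verb otherwise.

```python
# A toy, rule-based tagger for the word 'make' (hypothetical, illustrative only).
DETERMINERS = {"a", "an", "the", "this", "that", "my", "your", "its", "their"}


def tag_make(sentence: str) -> list[tuple[str, str]]:
    """Return (word, tag) pairs, tagging each 'make' as NOUN or VERB."""
    words = sentence.lower().split()
    tagged = []
    for i, word in enumerate(words):
        if word == "make":
            # Hand-written rule: a determiner directly before 'make' suggests a noun.
            previous = words[i - 1] if i > 0 else ""
            tag = "NOUN" if previous in DETERMINERS else "VERB"
        else:
            tag = "?"  # this toy only tries to tag 'make'
        tagged.append((word, tag))
    return tagged


print(tag_make("make a jacket"))       # [('make', 'VERB'), ('a', '?'), ('jacket', '?')]
print(tag_make("the make of jacket"))  # [('the', '?'), ('make', 'NOUN'), ('of', '?'), ('jacket', '?')]
```

The rule copes with the two examples above but fails on ‘the best make of jacket’, where the adjective ‘best’ separates ‘make’ from ‘the’ and the word is wrongly tagged as a verb. Each patch to the rule invites a new exception, which is why most modern taggers are trained rather than hand-written.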

All of these, and the other functions we would need in order to judge how conscious the language in a text is, do not lend themselves to rules. Rather, they rely on a knowledge of context and conventions. Acceptable language in a novel set in 1960s Alabama would be quite different from that in a modern social sciences paper about the same state and its inhabitants, and it is an understanding of that context that frames and shapes the language choices.

How machines learn

So, we have realised we are not going to be able to fix this one with a clever macro. What sort of computation do we need? Step forward AI – a term that covers a number of fields concerned with machines that mimic human intelligence. One of the main branches of AI that NLP draws on is machine learning, the field covering machines that learn a task or tasks from data, rather than following explicitly programmed rules, using a variety of approaches.

One of the best-known AI companies is Google’s DeepMind division. They have made a name for themselves by using machine learning to teach computers to play games. To understand how they have progressed in the field, we need a bit of a history lesson.

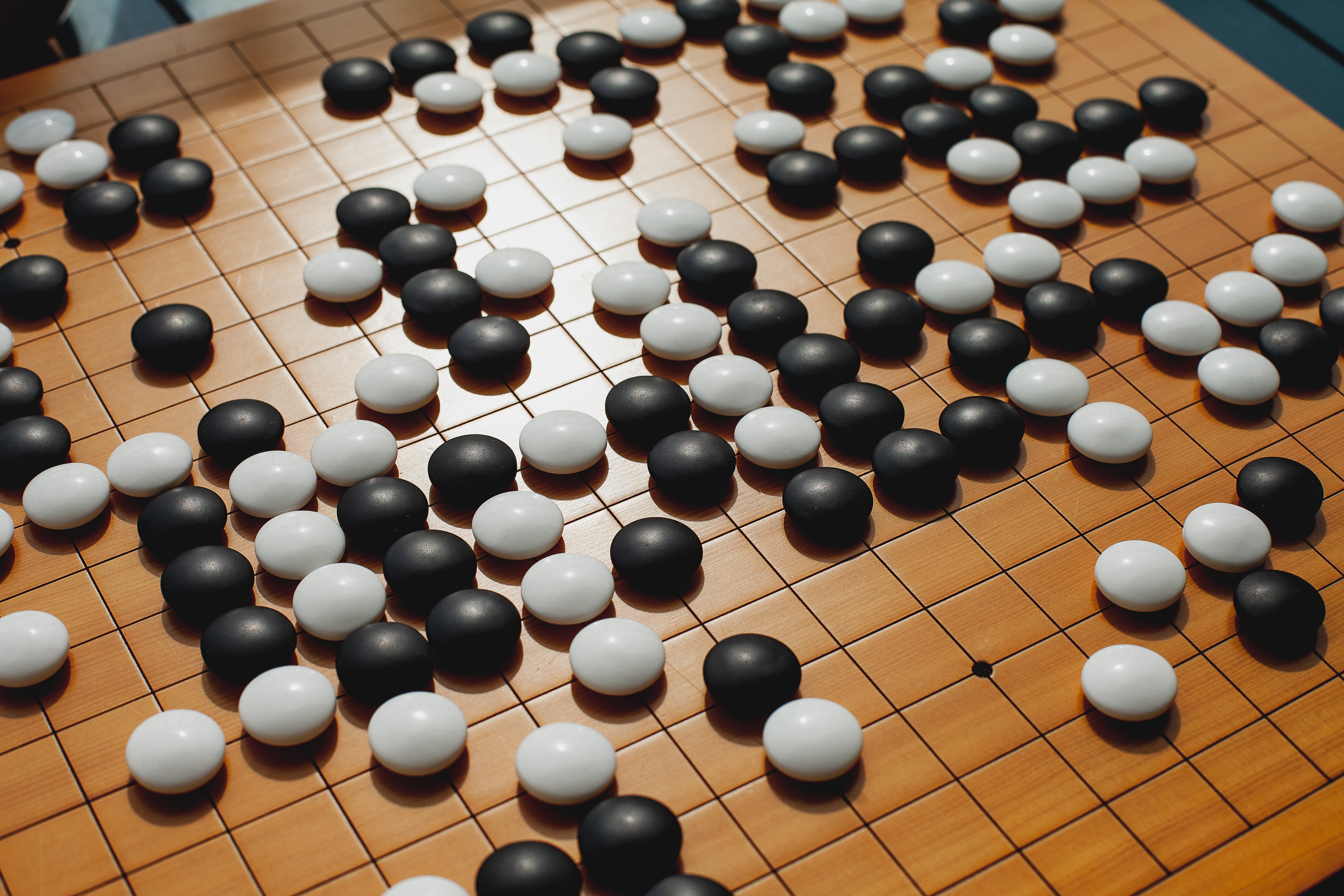

In 1997 an IBM project called Deep Blue beat the then World Chess Champion, Garry Kasparov. Deep Blue relied largely on brute-force search: evaluating millions of positions every second, it looked several moves ahead through the possible sequences of moves and then picked the most promising next move. DeepMind’s AlphaGo had to take a different approach, because Go has far more possible moves than chess, too many to search in this way. AlphaGo used neural networks (brain-like arrangements of computing elements with many connections between them): one suggested promising moves from the current position, and another estimated the likelihood of winning from the resulting position. Combining the two gave a more efficient way of narrowing down the choice of moves. AlphaGo was trained on records of games between strong human players and then by playing vast numbers of games against itself, improving its ability to select moves and to predict its chance of winning. Eventually, in 2016, it beat Lee Sedol, widely regarded as one of the best players of all time.
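Deep Blue’s look-ahead is an example of game-tree search. The Python sketch below is not Deep Blue’s method in detail (Deep Blue also scored unfinished chess positions with an evaluation function and pruned its search); it shows the basic minimax idea on a hypothetical toy game small enough to search right to the end: players take turns removing one, two or three counters from a pile, and whoever takes the last counter wins. Each extra move of look-ahead multiplies the number of positions to consider, which is why this brute-force style struggles with a game like Go.

```python
from functools import lru_cache
from typing import Optional

MOVES = (1, 2, 3)  # hypothetical toy game: take 1, 2 or 3 counters per turn


@lru_cache(maxsize=None)  # remember positions that have already been analysed
def can_win(pile: int) -> bool:
    """True if the player about to move can force a win from this pile."""
    # Look ahead through every legal move: a move is winning if it leaves the
    # opponent in a position from which they cannot force a win. An empty pile
    # means the opponent just took the last counter, so any() over no moves
    # correctly returns False.
    return any(not can_win(pile - m) for m in MOVES if m <= pile)


def best_move(pile: int) -> Optional[int]:
    """Return a move that forces a win, or None if every move loses."""
    for m in MOVES:
        if m <= pile and not can_win(pile - m):
            return m
    return None


print(best_move(10))  # 2, leaving 8 counters
print(best_move(8))   # None: every move hands the opponent a winning position
```

Running it shows that a pile that is a multiple of four is a lost position for the player to move, something the search discovers without being told.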

DeepMind have since developed AlphaGo further: a newer version, AlphaGo Zero, does not learn from games played by experienced players but learns from scratch by playing against itself. It uses a technique called reinforcement learning, in which a system learns by trial and error which actions earn rewards. DeepMind have also used reinforcement learning to master various video games from scratch (the Atari benchmark); their Deep Q-Network (DQN) system did this by learning to optimise a Q-value, its estimate of the total reward it can expect in the long run from taking a particular action in a particular situation. Here the system tries to gain positive rewards (and avoid negative ones) by, for example, collecting a game’s currency or surviving for a certain amount of time. It can then use the information about what it did and what reward it received to update its Q-values and so alter its strategy.
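To make the idea of a Q-value more concrete, here is a minimal Python sketch of tabular Q-learning, a simple form of the reinforcement learning described above. It is not DeepMind’s code: their Atari-playing DQN used a neural network in place of the small table used here. The environment (a hypothetical five-square corridor in which the only reward is 1 point for reaching the right-hand end) and the settings ALPHA, GAMMA and EPSILON are arbitrary choices for illustration.

```python
import random

N_STATES = 5        # squares 0..4; square 4 is the goal (hypothetical toy world)
ACTIONS = (-1, +1)  # step left or step right
ALPHA, GAMMA, EPSILON = 0.5, 0.9, 0.2  # learning rate, discount, exploration rate (illustrative)


def step(state: int, action: int) -> tuple[int, float, bool]:
    """Move along the corridor (the left wall blocks moves off the end);
    reaching the goal square earns a reward of 1 and ends the episode."""
    nxt = min(max(state + action, 0), N_STATES - 1)
    done = nxt == N_STATES - 1
    return nxt, (1.0 if done else 0.0), done


def greedy(q: dict, state: int, rng: random.Random) -> int:
    """Pick the action with the highest Q-value, breaking ties at random."""
    best = max(q[(state, a)] for a in ACTIONS)
    return rng.choice([a for a in ACTIONS if q[(state, a)] == best])


def train(episodes: int = 500, seed: int = 0) -> dict:
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}  # Q-table, all zero at first
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Explore at random some of the time; otherwise exploit the Q-table.
            action = rng.choice(ACTIONS) if rng.random() < EPSILON else greedy(q, state, rng)
            nxt, reward, done = step(state, action)
            # Nudge Q(state, action) towards reward + discounted best future value.
            future = 0.0 if done else max(q[(nxt, a)] for a in ACTIONS)
            q[(state, action)] += ALPHA * (reward + GAMMA * future - q[(state, action)])
            state = nxt
    return q


q = train()
policy = [max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(N_STATES - 1)]
print(policy)  # [1, 1, 1, 1]: step right from every square
```

After training, the table favours stepping right in every square, even though the reward only arrives at the very end: the update rule passes each reward back, discounted by GAMMA, to the moves that led to it.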

Why is this important? It shows a progression from a very controlled environment (chess) with a limited (although large) number of variables, to a more complex one (Go) and then to a more generalised one (a variety of games). We are still not at the point where this could be applied to a problem with very few constraints, like our language one, but this certainly shows progress. A later version, AlphaZero, has apparently taught itself chess from scratch to world champion level within 24 hours.

This combination of neural networks and reinforcement learning seems to me to offer the potential to create tools with a more subtle understanding of language. One problem, though, is that AI systems are often trained on huge datasets, and data gathered in the past can bring historical problems with it. Microsoft created an AI chatbot for Twitter called Tay, designed to mimic the speech patterns of a 19-year-old girl, which it did very well right up to the point when it learned to be inflammatory and offensive and had to be shut down. Microsoft believe that the trolling the bot experienced taught it how to be offensive. Similarly, Amazon developed an AI system to shortlist job candidates, but it showed a distinct bias against women. Amazon tracked the problem down to an underlying bias in the training data.
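The Amazon case shows how a model can absorb bias from its training data. The Python sketch below is a deliberately crude, hypothetical model, not Amazon’s system: it scores each word by the fraction of past CVs containing it that were shortlisted, and the ‘historical’ decisions are invented so that CVs mentioning ‘women’s’ were never shortlisted.

```python
from collections import defaultdict

# Invented "historical" decisions (illustrative only), skewed so that CVs
# mentioning "women's" were never shortlisted.
HISTORY = [
    ("captain of chess club", True),
    ("captain of women's chess club", False),
    ("python developer chess club", True),
    ("python developer women's coding society", False),
    ("women's rugby team captain", False),
    ("rugby team captain", True),
]


def learn_word_scores(history):
    """Score each word as the fraction of CVs containing it that were shortlisted."""
    seen, shortlisted = defaultdict(int), defaultdict(int)
    for text, was_shortlisted in history:
        for word in set(text.split()):  # count each word once per CV
            seen[word] += 1
            shortlisted[word] += int(was_shortlisted)
    return {word: shortlisted[word] / seen[word] for word in seen}


def score_cv(text, word_scores):
    """Average the learned scores of known words; unknown words are ignored."""
    known = [word_scores[w] for w in text.split() if w in word_scores]
    return sum(known) / len(known) if known else 0.5


scores = learn_word_scores(HISTORY)
print(scores["women's"])  # 0.0
print(score_cv("captain of chess club", scores) > score_cv("captain of women's chess club", scores))  # True
```

Nothing in the code mentions gender; the model simply reproduces the pattern in the decisions it learned from, which is exactly the kind of historical problem that can lurk in training data.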

Given the increasing pressure on social media companies to filter offensive content, platforms like YouTube and Facebook are undoubtedly trying to use AI to recognise problematic language, and some of this work may lead to tools we can use to highlight issues. However, as editors and proofreaders we are looking not just to flag poor language choices but to improve them and make the language more conscious. The way the Editor function in MS Word and Grammarly have developed certainly points to a way forward. While I don’t think a machine is going to take my job any time soon, I can certainly see areas where it could make progress. The challenge with an issue like conscious language is that it involves so many subtleties. The human ability to make judgements about these, and even to have a productive discussion with an author about a passage, means that, for the foreseeable future, a human editor will continue to add something to a piece of writing that a machine cannot.

About Andy Coulson

Andy Coulson is a reformed engineer and primary teacher, and a Professional Member of CIEP. He is a copyeditor and proofreader specialising in STEM subjects and odd formats like LaTeX.

About the CIEP

The Chartered Institute of Editing and Proofreading (CIEP) is a non-profit body promoting excellence in English language editing. We set and demonstrate editorial standards, and we are a community, training hub and support network for editorial professionals – the people who work to make text accurate, clear and fit for purpose.

Photo credits: chess by Bru-nO on Pixabay, robot by mohamed_hassan on Pixabay, Go by Elena Popova on Unsplash.

Posted by Harriet Power, CIEP information commissioning editor.

The views expressed here do not necessarily reflect those of the CIEP.